How does our brain learn from our mistakes? Does it prefer good news to bad news? These are the questions answered by a team of researchers led by Stefano Palminteri (Inserm-ENS), laureate of the ATIP-Avenir programme, from the Laboratoire de Neurosciences Cognitives. The results will be published in Nature Human Behaviour.

Generally speaking, humans tend to overestimate the likelihood of a positive event in the near future and to underestimate that of a negative event. In cognitive psychology, this is known as the optimism bias. Like other cognitive biases, it influences our reasoning, our judgements and our decisions, and hence our behaviours. It is thought to stem from a tendency to take “positive” information (good news) into account more than “negative” information (bad news). This basic asymmetry is assumed to generate and reinforce the bias, and leads us to believe that our own future outlook is, on average, better than that of others. It has been shown in particular in heavy smokers, who underestimate their risk of premature death, and in certain women, who underestimate their risk of getting skin cancer.

A research team from the Laboratoire de Neurosciences Cognitives (LNC) wished to know more about this phenomenon and understand its origin. Is it linked only to our beliefs about possible future events, or is it present more generally in any type of learning, including the most basic: learning by trial and error? To find out, the researchers studied the behaviour of a group of people engaged in a trial-and-error learning task, which consisted of choosing between two symbols associated with a monetary reward. Depending on their choice, participants could win €0.50 (“good news”), win nothing, or lose €0.50 (“bad news”). The results showed that participants gave, on average, 50% more weight to the “good news” than to the “bad news.” This general tendency of our brain to learn asymmetrically, favouring good news and discounting bad news, may be the basis for the optimism bias.
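To make the idea of asymmetric learning concrete, here is a minimal sketch in Python. It assumes a simple reinforcement learning rule in which the estimated value of a symbol is nudged toward each outcome, with one learning rate for better-than-expected outcomes and another for worse-than-expected ones. This rule, the function names and the learning-rate values are illustrative assumptions, not details taken from the study; the rates 0.3 and 0.2 were chosen only so that good news weighs 50% more than bad news.

```python
import random


def update_value(value, outcome, lr_positive, lr_negative):
    """Move the estimated value toward the outcome (hypothetical update rule).

    Better-than-expected outcomes use lr_positive,
    worse-than-expected outcomes use lr_negative.
    """
    prediction_error = outcome - value
    rate = lr_positive if prediction_error > 0 else lr_negative
    return value + rate * prediction_error


def average_belief(lr_positive, lr_negative, n_trials=5000, seed=0):
    """Average estimated value of a symbol that wins or loses 0.50 EUR
    with equal probability, so its true expected value is zero."""
    rng = random.Random(seed)
    value = 0.0
    history = []
    for _ in range(n_trials):
        outcome = rng.choice([0.5, -0.5])
        value = update_value(value, outcome, lr_positive, lr_negative)
        history.append(value)
    # Ignore the first half of the trials so the starting value does not matter.
    tail = history[n_trials // 2:]
    return sum(tail) / len(tail)


# Illustrative values only: the "optimistic" learner weights good news 50% more.
optimistic = average_belief(lr_positive=0.3, lr_negative=0.2)
realistic = average_belief(lr_positive=0.25, lr_negative=0.25)
print(f"optimistic learner's average belief: {optimistic:+.3f}")
print(f"realistic learner's average belief:  {realistic:+.3f}")
```

Under these assumptions, the learner with the larger rate for good news settles on a belief above the symbol's true expected value of zero, whereas the symmetric learner stays close to zero. In this toy setting, that gap is what an optimism bias would look like.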

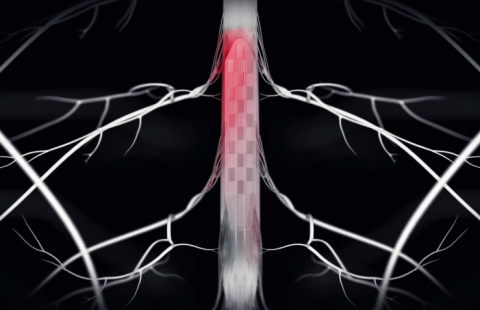

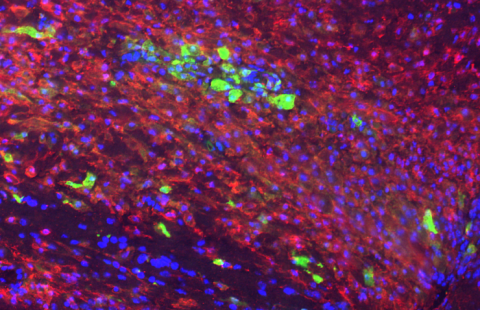

One question remained unanswered, that of the relationship between this deeply rooted learning bias and the brain reward circuits. To answer this question, the LNC researchers studied the brain activity of subjects carrying out the learning task just described, using functional magnetic resonance imaging (fMRI). According to Stefano Palminteri, who headed this study: “The brain activity recorded in the main structures of the brain reward circuit is almost twice as high in an optimistic subject compared to a more realistic subject, for the same monetary reward. This activity shows the existence of distinct profiles, more or less optimistic or realistic.”

Apart from providing a neuropsychological explanation for optimism, this work provides additional evidence for the existence of a deeply rooted learning bias in human cognition. The optimism bias could therefore be involved in psychopathologies such as depression (absence of the bias) or certain addictions (overexpression of the bias). “In order to better understand the cause and persistence of these behaviours, with their high social and human cost, it is therefore essential to study these basic learning biases,” the authors of the study believe.

- Laboratoire de Neurosciences Cognitives, Institut National de la Santé et de la Recherche Médicale, 75005 Paris, France.

- Laboratoire d'Économie Mathématique et de Microéconomie Appliquée (LEMMA), Université Panthéon-Assas, 75006 Paris, France.

- Amsterdam Brain and Cognition (ABC), Nieuwe Achtergracht 129, 1018 WS Amsterdam, The Netherlands.

- Amsterdam School of Economics (ASE), Faculty of Economics and Business (FEB), Roetersstraat 11, 1018 WB Amsterdam, The Netherlands.

- INSERM-CEA Cognitive Neuroimaging Unit (UNICOG), NeuroSpin Centre, 91191 Gif-sur-Yvette, France.

- Institut Jean-Nicod (IJN), CNRS UMR 8129, École Normale Supérieure, 75005 Paris, France.

- Institut d’Étude de la Cognition, Département d’Études Cognitives, École Normale Supérieure, 75005 Paris, France.